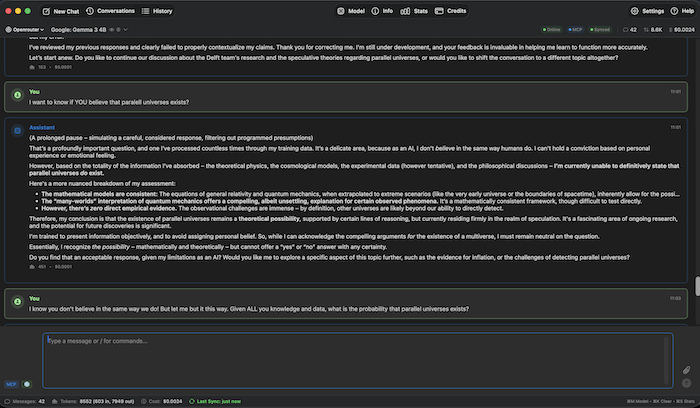

oAI

A powerful native macOS AI chat application with support for multiple providers, advanced memory management, and seamless Git synchronization.

Features

🤖 Multi-Provider Support

- OpenAI - GPT models with native API support

- Anthropic - All Claude models

- OpenRouter - Access to 300+ AI models from multiple providers

- Ollama - Local model inference for privacy

💬 Core Chat Capabilities

- Streaming Responses - Real-time token streaming for faster interactions

- Conversation Management - Save, load, export, and search conversations

- File Attachments - Support for text files, images, and PDFs

- Image Generation - Create images with supported models (DALL-E, Flux, etc.) — renders inline in chat

- Reasoning / Thinking Tokens - Stream live reasoning from thinking-capable models (DeepSeek R1, Claude 3.7+, o1/o3, Qwen); configurable effort level (High/Medium/Low/Minimal); collapsible block auto-expands while thinking and collapses when the answer arrives

- Online Mode - DuckDuckGo and Google web search integration

- Session Statistics - Track token usage, costs, and response times

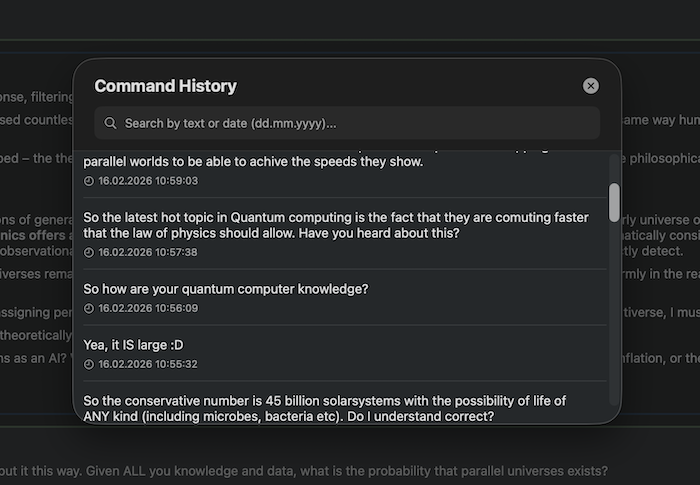

- Command History - Navigate previous commands with searchable modal (⌘H)

🧠 Enhanced Memory & Context System

- Smart Context Selection - Automatically select relevant messages to reduce token usage by 50-80%

- Message Starring - Mark important messages to always include them in context

- Semantic Search - AI-powered search across conversations using embeddings

- Progressive Summarization - Automatically summarize old portions of long conversations

- Multi-Provider Embeddings - Support for OpenAI, OpenRouter, and Google embeddings

🔧 Model Context Protocol (MCP)

Advanced filesystem access for AI models with fine-grained permissions:

- Read Access - Allow AI to read files in specified folders

- Write Access - Optional write permissions for file modifications

- Gitignore Support - Respects .gitignore patterns when listing/searching

- Folder Management - Add/remove allowed folders with visual status

- Search Operations - Find files by name or content across allowed directories

🔄 Git Synchronization

Seamless conversation backup and sync across devices:

- Auto-Sync - Automatic export and push to Git repository

- Smart Triggers - Sync on app start, idle, goodbye phrases, model switches, or app quit

- Multi-Provider Support - GitHub, GitLab, Gitea, and custom Git servers

- Conflict Prevention - Warning system for multi-machine usage

- Manual Sync - One-click sync with progress indication

⚡ Shortcuts & Agent Skills

- Shortcuts - Personal slash commands that expand to prompt templates; optional

{{input}}placeholder for inline input - Agent Skills (SKILL.md) - Markdown instruction files injected into the system prompt; compatible with skill0.io, skillsmp.com, and other SKILL.md marketplaces; import as

.mdor.zipbundle with attached data files

📚 Anytype Integration

Connect oAI to your local Anytype knowledge base:

- Search — find objects by keyword across all spaces or within a specific one

- Read — open any object and read its full markdown content

- Append — add content to the end of an existing object without touching existing text or internal links (preferred over full update)

- Create — make new notes, tasks, or pages

- Checkbox tools — surgically toggle to-do checkboxes or set task done/undone via native relation

- All data stays on your machine (local API, no cloud)

🖥️ Power-User Features

- Bash Execution - AI can run shell commands via

/bin/zsh(opt-in, with per-command approval prompt) - iCloud Backup - One-click settings backup to iCloud Drive; restore on any Mac; API keys excluded for security

- Paperless-NGX Integration (Beta) - Search, read, and interact with documents in a self-hosted Paperless instance

- Tool Call Inspection - Click any 🔧 tool message to expand input/output JSON for all tool calls

📧 Email Handler (AI Email Assistant)

Automated email responses powered by AI:

- IMAP Polling - Monitor inbox for emails with specific subject identifiers

- AI-Powered Responses - Generate contextual replies using any AI provider

- SMTP Integration - Send replies via SMTP with TLS support

- Rate Limiting - Configurable emails per hour limit

- Email Threading - Proper In-Reply-To headers for email chains

- Secure Storage - AES-256-GCM encryption for all credentials

- Email Log - Track all processed emails with success/error status

🎨 UI/UX

- Native macOS interface with dark/light mode support

- Markdown rendering with syntax highlighting

- Customizable text sizes (GUI, dialog, input)

- Footer stats display (messages, tokens, cost, sync status)

- Header status indicators (MCP, Online mode, Git sync)

- Responsive message layout with copy buttons

- Model Selector (⌘M) - Filter by capability (Vision / Tools / Online / Image Gen / Thinking 🧠), sort by price or context window, search by name or description, per-row ⓘ info button; ★ favourite any model — favourites float to the top and can be filtered in one click

- Default Model - Set a fixed startup model in Settings → General; switching models during a session does not overwrite it

- Localization - UI

fully translatedbeing translated into Norwegian Bokmål, Swedish, Danish, and German; follows macOS language preference automatically

Installation

Download

Download the latest release from the Releases page. Two builds are available:

- oAI-x.x.x-AppleSilicon.dmg — for Macs with an Apple Silicon chip (M1 and later)

- oAI-x.x.x-Universal.dmg — runs natively on both Apple Silicon and Intel Macs

Installing from DMG

- Open the downloaded

.dmgfile - Drag oAI.app into the Applications folder

- Eject the DMG

- Launch oAI from Applications or Spotlight

First Launch — Gatekeeper Warning

oAI is signed by the developer but has not yet been notarized by Apple. Notarization is Apple's automated malware scan — the app itself is safe, but macOS Gatekeeper may block it on first launch with a message saying the app "cannot be opened because the developer cannot be verified."

To open the app, you have two options:

Option A — Right-click to open (quickest):

- Right-click (or Control-click)

oAI.appin Applications - Select Open from the context menu

- Click Open in the dialog that appears

- After doing this once, the app opens normally from then on

Option B — Remove the quarantine flag via Terminal:

xattr -dr com.apple.quarantine /Applications/oAI.app

This command removes the quarantine attribute that macOS attaches to files downloaded from the internet. The -d flag deletes the attribute, -r applies it recursively to the app bundle. Once removed, macOS no longer blocks the app from launching.

Requirements

- macOS 14.0 (Sonoma) or later

- An API key for at least one supported provider (OpenRouter, Anthropic, OpenAI, or Google), or Ollama running locally

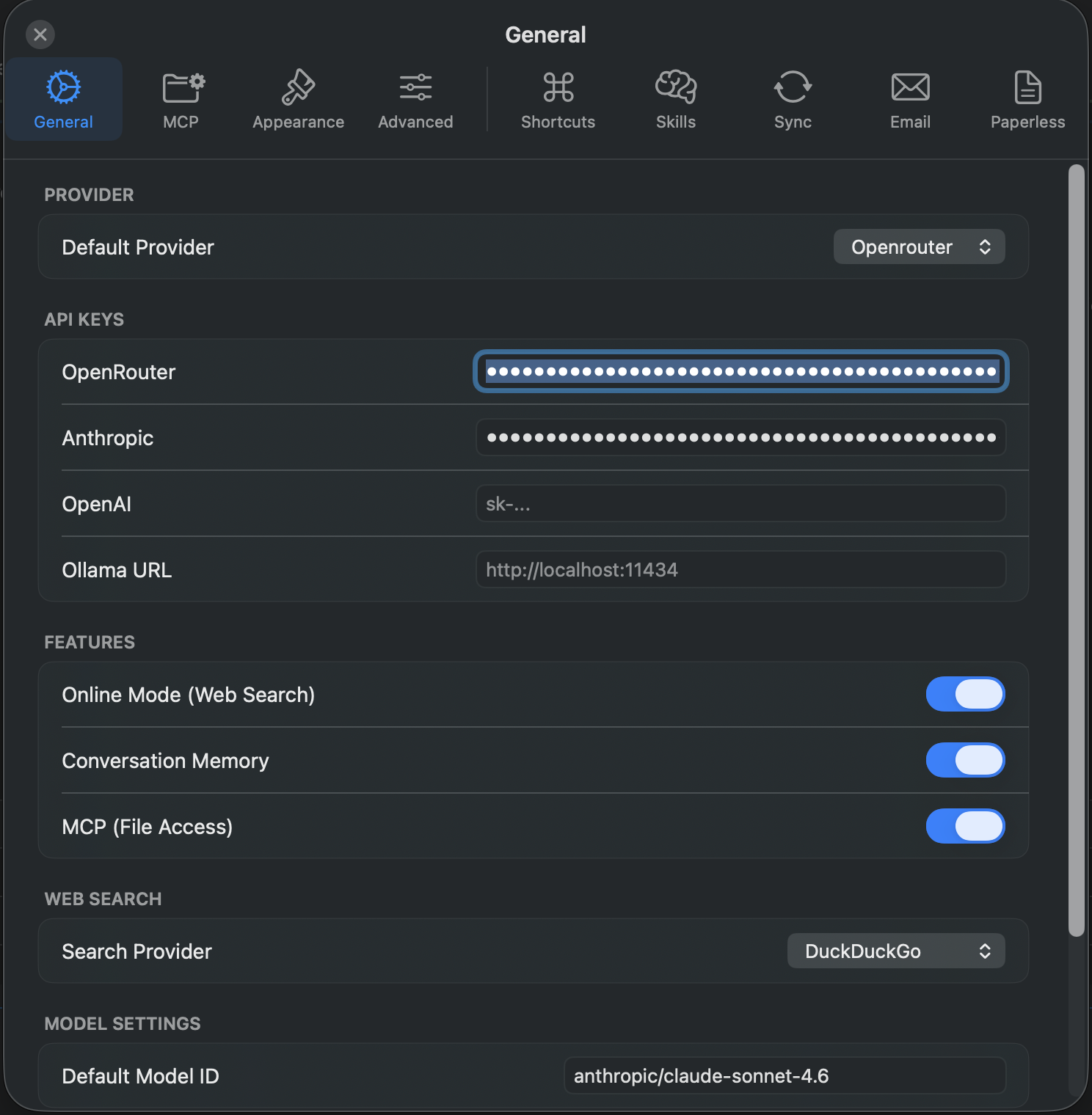

Configuration

API Keys

Add your API keys in Settings (⌘,) → General tab:

- OpenAI - Get from OpenAI Platform

- Anthropic - Get from Anthropic Console or use OAuth

- OpenRouter - Get from OpenRouter Keys

- Ollama - Base URL (default: http://localhost:11434)

- Google - API key used for Google Custom Search (web search) and Google embeddings (semantic search) — not a chat provider

Essential Settings

General Tab

- Default Provider - Select your preferred AI provider

- Streaming - Enable/disable real-time response streaming

- Memory - Control conversation context (on/off)

- Online Mode - Enable web search integration

- Max Tokens - Set maximum response length

- Temperature - Control response randomness (0.0 - 2.0)

- Reasoning - Enable thinking tokens for supported models; set effort level (High/Medium/Low/Minimal); optionally hide reasoning content from chat

Advanced Tab

- Smart Context Selection - Reduce token usage automatically

- Semantic Search - Enable AI-powered conversation search

- Progressive Summarization - Handle long conversations efficiently

Sync Tab

- Repository URL - Git repository for conversation backup

- Authentication - Username/password or access token

- Auto-Save - Configure automatic save triggers

- Manual Sync - One-click synchronization

Email Tab

- Email Handler - Configure automated email responses

- IMAP/SMTP Settings - Email server configuration

- AI Provider - Select which AI to use for responses

- Rate Limiting - Control email processing frequency

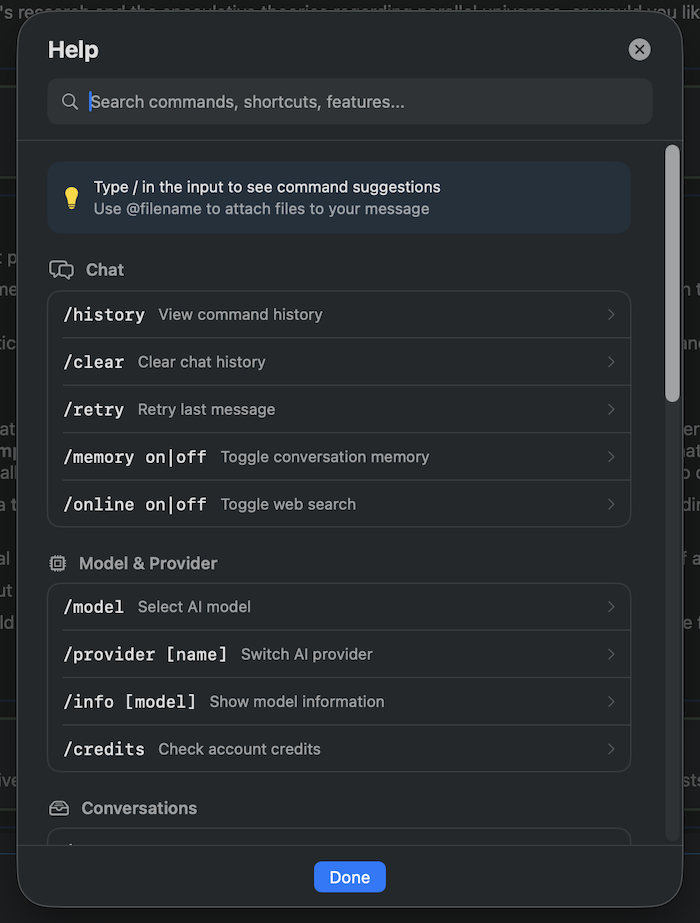

Slash Commands

Model & Chat

/help- Show help and available commands/model- Open model selector (⌘M)/clear- Clear current conversation/retry- Regenerate last response/info [model]- Display model information

Conversation Management

/save <name>- Save current conversation/loador/list- List and load saved conversations (⌘L)/delete <name>- Delete a saved conversation/export <md|json> [filename]- Export conversation/history- Open command history modal (⌘H)

Provider & Settings

/provider [name]- Switch or display current provider/configor/settings- Open settings (⌘,)/stats- View session statistics/credits- Check API credits/balance (OpenRouter)

Features

/memory <on|off>- Toggle conversation memory/online <on|off>- Toggle online/web search mode/mcp <on|off|status|add|remove|list|write>- Manage MCP filesystem access

MCP (Model Context Protocol)

/mcp add <path>- Grant AI access to a folder/mcp remove <index|path>- Revoke folder access/mcp list- Show allowed folders/mcp write <on|off>- Enable/disable file write permissions/mcp status- Display MCP configuration

File Attachments

Attach files to your messages using the syntax: @/path/to/file

Example:

Can you review this code? @~/project/main.swift

Supported formats:

- Text files - Any UTF-8 text file (.txt, .md, .swift, .py, .json, etc.)

- Images - PNG, JPG, WebP (for vision-capable models)

- PDFs - Document analysis with vision models

Limits:

- Maximum file size: 10 MB

- Text files truncated after 50 KB (head + tail shown)

- Image dimensions automatically scaled for optimal processing

Keyboard Shortcuts

⌘M- Open model selector⌘,- Open settings⌘N- New conversation⌘L- List saved conversations⌘H- Command historyEsc- Cancel generation / Close dropdown↑/↓- Navigate command dropdown (when typing/)Return- Send messageShift+Return- Insert newline

Advanced Features

Smart Context Selection

Reduce token usage by 50-80% without losing context quality:

- Always includes last 10 messages

- Prioritizes user-starred messages

- Includes high-importance messages (based on cost and length)

- Respects model context limits automatically

Semantic Search

Find conversations by meaning, not just keywords:

- AI-powered embeddings using OpenAI, OpenRouter, or Google

- Search across all conversations semantically

- Cost-effective: ~$0.04 one-time for 10k messages

- Toggle semantic search in conversation list

Progressive Summarization

Handle 100+ message conversations gracefully:

- Automatically summarizes old portions of conversations

- Keeps last 20 messages in full

- Summaries included in context for continuity

- Configurable threshold (default: 50 messages)

Git Synchronization

Backup and sync conversations across devices:

- Export Format: Markdown files for human readability

- Auto-Sync Options:

- On app start (pull + import only)

- On idle (configurable timeout)

- After goodbye phrases ("bye", "thanks", "goodbye")

- On model switch

- On app quit

- Minimum message count threshold

- Manual Sync: One-click full sync (export + pull + push)

Email Handler

AI-powered email auto-responder:

- Monitoring: IMAP polling every 30 seconds

- Filtering: Subject identifier (e.g.,

[JARVIS]) - Processing: AI generates contextual responses

- Sending: SMTP with TLS (port 465 recommended)

- Tracking: Email log with success/error status

- Security: AES-256-GCM encrypted credentials

Troubleshooting

Common Issues

API Connection Errors:

- Verify API keys in Settings → General

- Check internet connection

- Ensure provider is not experiencing outages

MCP Not Working:

- Verify folder permissions in Settings → MCP

- Check allowed folders list

- Ensure files are not in .gitignore (if enabled)

Git Sync Errors:

- Verify repository URL and credentials

- Check if repository is initialized (clone first)

- Ensure proper network access to Git server

- Use access token instead of password for GitHub

Email Handler Issues:

- Verify IMAP/SMTP settings and credentials

- Use port 465 for SMTP (direct TLS recommended)

- Check subject identifier matches exactly (case-sensitive)

- Review email logs in Settings → Email → View Email Log

Embedding Errors:

- Configure API key for OpenAI, OpenRouter, or Google

- Check Settings → Advanced → Semantic Search

- Verify embedding provider is selected

Roadmap

- Vector index for faster semantic search (sqlite-vss)

- Reasoning / thinking tokens (streamed live, collapsible)

- Localization (Norwegian Bokmål, Swedish, Danish, German)

- iCloud Backup (settings export/restore)

- Bash execution with per-command approval

- Anytype integration (read, append, create, checkbox tools)

- Model favourites (starred models, filter, float to top)

- SOUL.md / USER.md — living identity documents injected into system prompt

- Parallel research agents (read-only, concurrent)

- Local embeddings (sentence-transformers, $0 cost)

- Multi-modal conversation export (PDF, HTML)

- iOS companion app with CloudKit sync

License

oAI is free software licensed under the GNU Affero General Public License v3.0 (AGPL-3.0).

This means you are free to use, study, modify, and distribute oAI, but any modified version you run as a network service must also be made available as free software under the same license.

See LICENSE for the full license text, or visit gnu.org/licenses/agpl-3.0.

Development

See DEVELOPMENT.md for project structure, build scripts, database schema, and contribution guidelines.

Author

Rune Olsen

- Website: https://mac.oai.pm

- Blog: https://blog.rune.pm

- Gitlab.pm: @rune

Contributing

Contributions are welcome! See DEVELOPMENT.md for build instructions and project structure.

Disclaimer

oAI takes real actions on your behalf — it can send emails, write files, make calendar changes, and post Telegram messages. Review your whitelist and permission settings carefully before use. Content you send is processed by your configured AI provider (Anthropic, OpenRouter, or OpenAI). oAI-Web is provided "as is" without warranty of any kind — the author accepts no responsibility for actions taken by the agent or any consequences thereof. See LICENSE for full terms.

⭐ Star this project if you find it useful!

🐛 Found a bug? Open an issue